Introduction

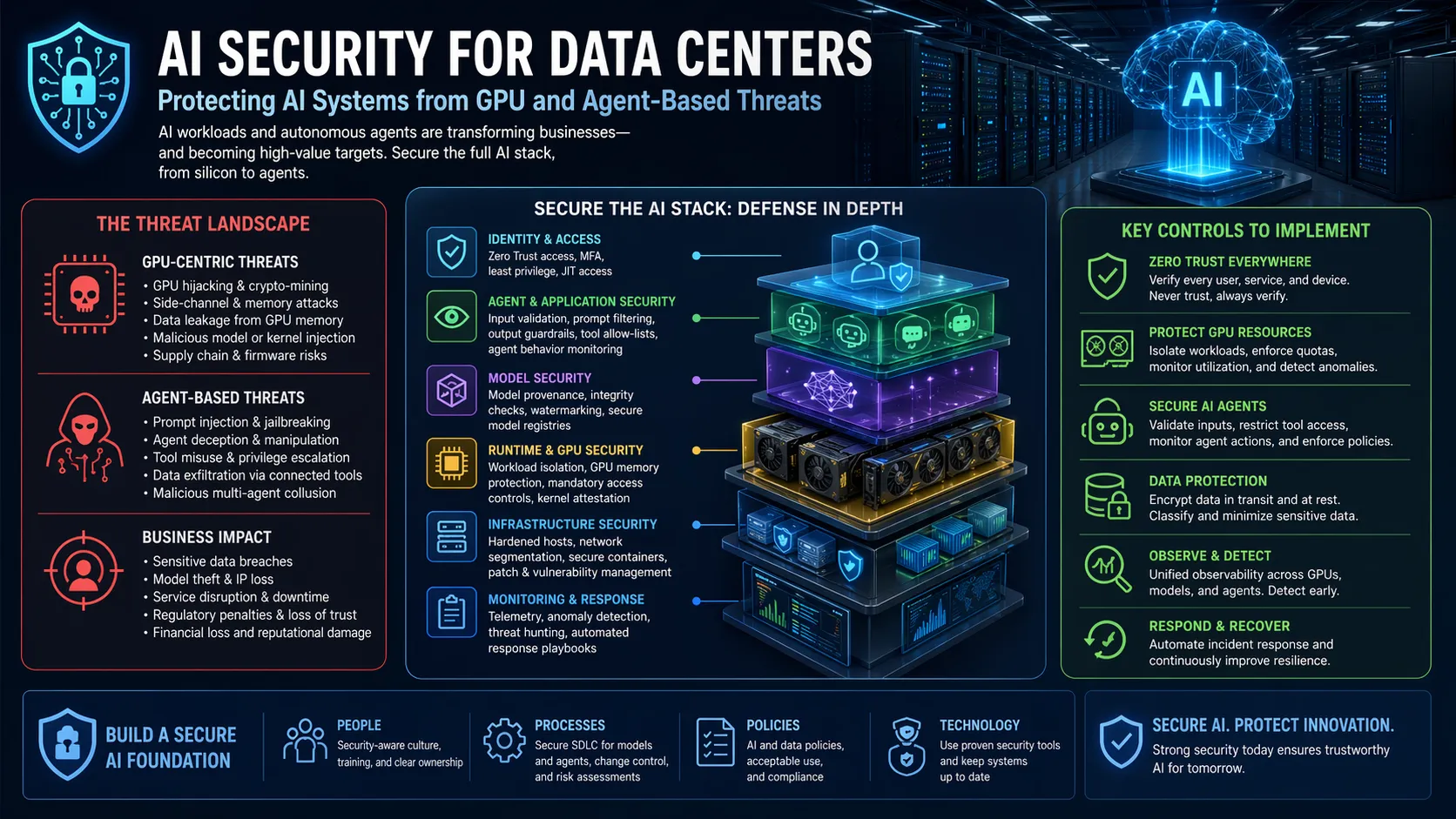

Modern AI workloads have fundamentally changed what a data center must protect. Static perimeter models built for predictable applications cannot secure fast-moving pipelines, shared GPU fleets, and distributed compute environments. Securing these environments requires a complete rethinking of segmentation, access governance, and threat detection. Without that work, organizations leave training data, model weights, and inference pipelines exposed. This article explains how AI security for data centers works, where traditional controls fail, and what practitioners must prioritize today.

Why AI Workloads Break Traditional Security Models

Traditional data centers assumed predictable traffic flows and centralized control. AI workloads do the opposite. Training pipelines constantly move datasets across racks, zones, and clouds during ingestion, preprocessing, training, and validation. This continuous data movement creates an attack surface that legacy firewalls and static boundaries were never designed to handle.

AI environments concentrate highly sensitive data—personal information, behavioral analytics, operational telemetry, and proprietary documents—into training pipelines. A single compromise reveals far more than in conventional workloads. High-density GPU clusters introduce new lateral movement paths, and when isolation relies only on software constructs like Kubernetes namespaces, an attacker who compromises one workload may reach others on the same physical host.

Organizations increasingly report that AI pipelines move data so extensively that traditional controls lose visibility entirely. What worked for CPU-based applications fails when GPUs and distributed frameworks become the norm.

GPU Vulnerabilities as the New Critical Attack Surface

GPUs are not just faster processors. They introduce distinct security challenges that CPU-centric defenses miss. In January 2025, NVIDIA disclosed seven vulnerabilities affecting GPU display drivers and virtual GPU software, impacting millions of systems from enterprise AI infrastructure to cloud platforms. Separately, CVE-2025-23266, a critical flaw in the NVIDIA Container Toolkit, allowed a malicious container to bypass isolation and gain root access to the host system. Three lines of malicious code in a Docker image could compromise an entire Kubernetes node.

The GPUBreach attack, disclosed in April 2026, exploits Rowhammer-style bit flips in GPU GDDR6 memory to escalate privileges and take full system control. This hardware-level vulnerability affects systems running NVIDIA GPUs in shared cloud environments and AI development clusters. In multi-tenant GPU deployments, a single tenant’s malicious workload can degrade model accuracy from 80% to less than 1% or extract residual data from another tenant’s processing.

These vulnerabilities expose a hard truth: GPU security cannot be an afterthought. Organizations must treat GPU infrastructure as a primary attack vector, not just as compute acceleration.

The Rise of Agentic AI Threats

AI-powered attacks have moved from theoretical to operational. The Anthropic disclosure in late 2025 documented what the company described as the first reported AI-orchestrated cyber-espionage campaign, in which agentic AI models performed reconnaissance, vulnerability discovery, lateral movement, exploitation, and exfiltration at machine speed. The attackers framed each attack phase as a benign-looking prompt, which let them slip past the model's guardrails.

AI agents can now operationalize the full attack lifecycle faster than any human team can respond. These advances render conventional segmentation and perimeter models ineffective. Routine data center operations provide cover for adversarial actions, and the attack surface shifts dynamically as autonomous agents adapt in real time.

This changes the defender’s calculus. You cannot outpace machine-speed reconnaissance with manual reviews or static rules. AI security for data centers must assume that adversaries will use AI against your infrastructure.

Zero Trust Architecture as the Only Viable Foundation

Zero trust architecture has moved from optional to mandatory. For AI environments, zero trust means assuming breach, verifying every request, and minimizing blast radius. Traditional security defined who is trusted. Confidential computing minimizes who must be trusted by shifting the security boundary to hardware-enforced Trusted Execution Environments (TEEs).

Hardware-rooted zero trust ensures that data and models remain cryptographically protected throughout execution. Even infrastructure administrators with full system privileges cannot access data or model weights inside the enclave. This matters enormously for regulated industries where sensitive data must be protected while in use.

The architecture follows a strict remote attestation workflow. The TEE first generates hardware-signed evidence describing its configuration and the code it has loaded. An external attestation service evaluates this evidence. Only after successful validation does a key broker service release decryption keys directly into protected memory. The model and data remain encrypted until safely inside the TEE, never exposed to host infrastructure.
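The attestation workflow above can be sketched as three roles: a TEE that produces evidence, an attestation service that checks it, and a key broker that releases a key only on success. This is a minimal illustration, not a real TEE protocol. The names `HARDWARE_KEY`, `EXPECTED_MEASUREMENT`, `produce_evidence`, `verify_evidence`, and `release_key` are hypothetical, and an HMAC stands in for the hardware-rooted asymmetric signature that real platforms use.

```python
import hashlib
import hmac
import os
from typing import Optional

# Hypothetical values for illustration. Real TEEs sign evidence with a key
# rooted in hardware; a shared-secret HMAC is only a stand-in for that here.
HARDWARE_KEY = b"demo-hardware-root-key"
EXPECTED_MEASUREMENT = hashlib.sha256(b"approved-inference-image-v1").hexdigest()


def produce_evidence(workload_image: bytes, nonce: bytes) -> dict:
    """TEE side: measure the loaded workload and sign measurement plus nonce."""
    measurement = hashlib.sha256(workload_image).hexdigest()
    signature = hmac.new(
        HARDWARE_KEY, measurement.encode() + nonce, hashlib.sha256
    ).hexdigest()
    return {"measurement": measurement, "nonce": nonce, "signature": signature}


def verify_evidence(evidence: dict, expected_nonce: bytes) -> bool:
    """Attestation service side: check signature, freshness, and measurement."""
    expected_signature = hmac.new(
        HARDWARE_KEY,
        evidence["measurement"].encode() + evidence["nonce"],
        hashlib.sha256,
    ).hexdigest()
    signature_ok = hmac.compare_digest(expected_signature, evidence["signature"])
    nonce_ok = hmac.compare_digest(evidence["nonce"], expected_nonce)
    measurement_ok = hmac.compare_digest(evidence["measurement"], EXPECTED_MEASUREMENT)
    return signature_ok and nonce_ok and measurement_ok


def release_key(evidence: dict, expected_nonce: bytes, model_key: bytes) -> Optional[bytes]:
    """Key broker side: hand over the key only after attestation succeeds."""
    return model_key if verify_evidence(evidence, expected_nonce) else None


if __name__ == "__main__":
    nonce = os.urandom(16)  # fresh challenge so old evidence cannot be replayed
    evidence = produce_evidence(b"approved-inference-image-v1", nonce)
    print("key released:", release_key(evidence, nonce, b"model-key") is not None)
```

The nonce check is what prevents replay: evidence captured from an earlier, legitimate boot fails verification against a new challenge.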

Data Security Posture Management (DSPM) for AI Workloads

Visibility remains the weakest link in most AI security programs. DSPM addresses this by classifying data sensitivity and tracking data as it moves across environments. Unlike traditional Cloud Security Posture Management, which focuses on infrastructure vulnerabilities, DSPM identifies exposed personally identifiable information, developer secrets, and privileged data with inadequate security measures.

For AI workflows, DSPM answers three critical questions: what data is involved, where it flows, and who—or what—touches it. Security teams can calibrate risk in real time rather than relying on assumptions. DSPM provides agentless scanning of cloud storage, flagging issues like publicly accessible sensitive data or unencrypted databases before they become breaches.
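As a rough illustration of the classification and flagging step, the sketch below labels stored objects by the sensitive data they contain and reports those that are public or unencrypted. It is a toy under stated assumptions: the `StorageObject` type, the three regular expressions, and the `classify` and `findings` helpers are all hypothetical, and commercial DSPM tools use far richer detection than pattern matching.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only; real classifiers go well beyond regexes.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


@dataclass
class StorageObject:
    """Hypothetical inventory record for one stored object."""
    path: str
    content: str
    public: bool
    encrypted: bool


def classify(obj: StorageObject) -> list:
    """Return the sorted names of sensitive data types found in the object."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(obj.content))


def findings(objects: list) -> list:
    """Flag objects that hold sensitive data and are public or unencrypted."""
    results = []
    for obj in objects:
        labels = classify(obj)
        if not labels:
            continue  # nothing sensitive, so exposure is not a data risk here
        issues = []
        if obj.public:
            issues.append("publicly accessible")
        if not obj.encrypted:
            issues.append("unencrypted")
        if issues:
            results.append({"path": obj.path, "labels": labels, "issues": issues})
    return results
```

Combining sensitivity with exposure is the point of the design: a public bucket of non-sensitive data and an encrypted private store of secrets are both left out of the findings.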

Without DSPM, AI data pipelines become black boxes. Security teams cannot enforce controls they cannot see.

GPU Multi-Tenancy Isolation Strategies

Multi-tenant GPU environments introduce attack surfaces fundamentally different from CPU-based virtualization. NVIDIA Multi-Instance GPU (MIG) technology provides hardware-level isolation, partitioning a single GPU into up to seven separate instances, each with dedicated paths through the memory system. One tenant cannot read or overwrite another tenant’s GPU memory. Fault isolation prevents a crash in one instance from affecting the rest of the GPU.

However, hardware isolation alone is not enough. Container-native isolation creates lightweight boundaries around the entire container launch process, including privileged tooling. When a workload lands on a node, the runtime spawns a hardened container—a virtual node—and runs the entire container lifecycle inside it. Even if an attacker breaks out of their container, they land in an isolated environment with no tooling, no shell, and no privilege escalation path.

Organizations running shared GPU infrastructure without hardware-enforced tenant isolation are accepting risk they may not fully understand. The 2026 GPUBreach disclosure should serve as a wake-up call.

Question for readers: Does your team know which tenants share GPU memory on your infrastructure today, and have you tested the isolation boundaries under adversarial conditions?
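One way to start answering that question is a co-tenancy audit over your scheduler's placement data. The sketch below assumes a hypothetical `Placement` record (tenant, GPU, optional MIG instance) exported from whatever inventory you have. It reports GPUs where more than one tenant lands in the same memory domain, either because the GPU is unpartitioned or because two tenants were assigned the same MIG instance.

```python
from collections import defaultdict
from typing import NamedTuple, Optional


class Placement(NamedTuple):
    """Hypothetical inventory record: where one tenant's workload runs."""
    tenant: str
    gpu_id: str
    mig_instance: Optional[str]  # None means the GPU is used unpartitioned


def shared_without_isolation(placements: list) -> dict:
    """Return {gpu_id: sorted tenants} for GPUs where tenants share a memory domain."""
    by_gpu = defaultdict(list)
    for placement in placements:
        by_gpu[placement.gpu_id].append(placement)

    exposed = {}
    for gpu_id, items in by_gpu.items():
        if any(p.mig_instance is None for p in items):
            # Unpartitioned GPU: everyone placed here shares one memory domain.
            groups = [{p.tenant for p in items}]
        else:
            # Partitioned GPU: only tenants on the same MIG instance share memory.
            by_instance = defaultdict(set)
            for p in items:
                by_instance[p.mig_instance].add(p.tenant)
            groups = list(by_instance.values())
        tenants = set().union(*(g for g in groups if len(g) > 1))
        if tenants:
            exposed[gpu_id] = sorted(tenants)
    return exposed
```

An audit like this only tells you where boundaries are missing on paper. Testing the boundaries that do exist under adversarial conditions is a separate exercise.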

Securing the AI Supply Chain and Regulatory Compliance

The AI supply chain introduces risks that many organizations overlook. Compromised pre-trained models, poisoned training data, and vulnerable dependencies in AI frameworks all serve as potential backdoors. Recent npm package compromises and emerging risks in AI models underscore the need for a “verify, then trust” approach.

In May 2025, cybersecurity agencies from the US, UK, Australia, and New Zealand published joint guidance on securing data used to train and operate AI systems. The guidance covers secure design, development, deployment, and maintenance across the entire AI lifecycle. Key recommendations include cryptographic hashing to verify data integrity and anomaly detection to identify harmful data before model training.
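The hashing recommendation can be illustrated with a small manifest check: record a SHA-256 digest for every file in a dataset when it is approved, then compare before each training run. This is a sketch using only the Python standard library. The function names `sha256_file`, `build_manifest`, and `verify_manifest` are illustrative, and a production setup would also sign the manifest and store it away from the data it describes.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root) -> dict:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    root = Path(root)
    return {
        path.relative_to(root).as_posix(): sha256_file(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def verify_manifest(root, manifest: dict) -> dict:
    """Return {path: problem} for files that are missing, modified, or unexpected."""
    current = build_manifest(root)
    problems = {}
    for name, expected in manifest.items():
        actual = current.get(name)
        if actual is None:
            problems[name] = "missing"
        elif not hmac.compare_digest(actual, expected):
            problems[name] = "modified"
    for name in current.keys() - manifest.keys():
        problems[name] = "unexpected"
    return problems
```

An empty result from `verify_manifest` means the dataset matches what was approved. Hashing detects tampering after approval, but it cannot tell you whether the approved data was clean to begin with, which is where the anomaly detection recommendation comes in.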

Regulatory pressure is rising. The EU AI Act, state-level AI measures in the US, and frameworks like the NIST AI Risk Management Framework and ISO/IEC 42001 all demand traceability and control over AI data flows. Organizations building internal AI platforms in regulated sectors must map security controls directly to compliance requirements.

East-West Traffic Encryption and Microsegmentation

AI workloads transfer huge volumes of data internally during ingestion, preprocessing, and training. Most data centers still do not encrypt east-west traffic, assuming internal networks are inherently trusted. That assumption no longer holds. In an AI environment, compromise of a single container or node enables attackers to capture unencrypted internal traffic, perform man-in-the-middle attacks, or tamper with data in transit.
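A common way to encrypt and authenticate internal traffic is mutual TLS, where both ends present certificates issued by an internal certificate authority. The sketch below uses Python's standard `ssl` module to show the policy side of that setup. The function names and the choice of TLS 1.2 as the minimum version are assumptions for illustration, and the certificate paths are placeholders you would supply from your own PKI.

```python
import ssl


def server_policy() -> ssl.SSLContext:
    """Server-side TLS policy: modern protocol floor, client certificate required."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Reject any peer that does not present a certificate we can verify.
    ctx.verify_mode = ssl.CERT_REQUIRED
    return ctx


def client_policy() -> ssl.SSLContext:
    """Client-side TLS policy: verifies the server certificate and hostname."""
    # PROTOCOL_TLS_CLIENT enables CERT_REQUIRED and hostname checking by default.
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def with_identity(ctx: ssl.SSLContext, cert_file: str, key_file: str, ca_file: str) -> ssl.SSLContext:
    """Attach this workload's certificate and the internal CA used to verify peers."""
    ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    ctx.load_verify_locations(cafile=ca_file)
    return ctx
```

A service would call something like `with_identity(server_policy(), cert, key, ca)` and wrap its listening socket with the result. In Kubernetes environments this is often delegated to a service mesh instead of being coded into each workload.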

Microsegmentation limits east-west movement inside Kubernetes clusters and isolates compromised containers before problems spread across inference environments. Reference architectures now deploy firewall and threat prevention functions directly on data processing units, inspecting traffic without drawing on CPU or GPU resources used for AI workloads.
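At its core, microsegmentation is a default-deny rule: a flow between two workloads is permitted only if it appears on an explicit allow-list. The sketch below models that check in a few lines. The workload labels, ports, and the `ALLOWED` set are hypothetical examples, and real enforcement happens in the network layer (for instance through Kubernetes network policies or DPU-hosted firewalls), not in application code.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    """One observed or requested connection between labelled workloads."""
    src: str
    dst: str
    port: int


# Hypothetical allow-list for an AI pipeline; anything not listed is denied.
ALLOWED = {
    ("ingest", "preprocess", 9000),
    ("preprocess", "training", 9100),
    ("training", "model-registry", 443),
    ("gateway", "inference", 8443),
}


def is_allowed(flow: Flow) -> bool:
    """Default deny: permit a flow only when it is explicitly listed."""
    return (flow.src, flow.dst, flow.port) in ALLOWED


def blocked_flows(observed: list) -> list:
    """Return the observed flows that the policy would deny."""
    return [flow for flow in observed if not is_allowed(flow)]
```

Under this policy a compromised inference container cannot open a connection to the training tier, because no rule lists that pair, even though both sit on the same internal network.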

If your internal traffic flows unencrypted, an attacker who breaches any single workload gains visibility across your entire AI pipeline. That is an unacceptable risk posture for production AI infrastructure.

Practical Next Steps for AI Security Programs

Begin with visibility. Map every data flow across training, inference, and validation pipelines. Identify where sensitive data resides and who can access it. Then enforce hardware-rooted zero trust for your most critical workloads, starting with confidential computing for regulated data. Implement microsegmentation to contain east-west movement and encrypt all internal traffic by default. Finally, test your defenses with adversarial simulations that assume AI-powered attackers.

Consider evaluating GPU isolation technologies, DSPM tools, and confidential computing platforms. Prioritize controls that provide measurable risk reduction over those that merely check compliance boxes.

Conclusion

AI security for data centers is not a feature set. It is a foundational requirement that determines whether organizations can safely scale AI without exposing their most valuable assets. Traditional models are obsolete. GPU vulnerabilities are real and present. Agentic threats are already operational. The path forward requires hardware-rooted zero trust, continuous visibility through DSPM, and isolation strategies designed specifically for GPU architectures. Organizations that modernize now will secure their AI future. Those that wait will clean up the consequences later.

Evaluate your AI infrastructure’s security posture today—before an adversary does it for you.

FAQs

What is the biggest security risk in AI data centers today?

Shared GPU infrastructure without hardware-enforced isolation. Vulnerabilities like CVE-2025-23266 and GPUBreach allow attackers to break out of containers and access other tenants’ data or take full system control.

How does confidential computing protect AI workloads?

It keeps data and models encrypted during processing using hardware-enforced Trusted Execution Environments. Even infrastructure administrators cannot access the plaintext data inside the enclave.

Do traditional firewalls work for AI data centers?

No. East-west traffic dominates AI pipelines, and traditional perimeter firewalls do not inspect internal flows. You need microsegmentation and east-west encryption.