Introduction

Cloud 3.0 is not merely an upgrade to existing virtual machines or serverless functions. It represents a fundamental change in the relationship between an application and the infrastructure it runs on. After two decades of cloud evolution, we have moved past the era of simple cost savings and entered a phase defined by intelligent autonomy, distributed architecture, and AI-native operations.

Cloud 3.0 is the answer to a specific problem that Cloud 2.0 could not solve. While the previous generation optimized resources (CPUs, storage, networking), this new generation optimizes outcomes. For the informed tech professional in the UK or US, understanding this distinction is critical to avoiding architectural debt. Cloud 3.0 requires us to stop thinking about where data lives and start focusing on how it is governed, processed, and acted upon in real-time.

Defining the Primary Entity (Cloud 3.0)

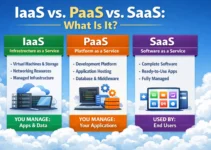

Cloud 3.0 is defined by the shift from a “resource-centric” view to an “application-centric” and “data-centric” view. In Cloud 1.0, we virtualized hardware (IaaS). In Cloud 2.0, we orchestrated containers (PaaS/Kubernetes). In Cloud 3.0, the infrastructure is invisible. It is an intent-driven system where developers declare the what (latency requirements, data sovereignty rules, and AI training needs), and the infrastructure automatically manages the how.

This is not a theoretical concept. Google defines Cloud 3.0 as the state where you blur the boundaries between servers to create one big compute resource, dropping applications into the cloud without manual load balancing or job placement. Similarly, enterprises like Unilever view it as the enabler of AI-powered digital twins across 270 factories, collapsing the distance between consumer signals and product creation.

The Evolution: From Lift-and-Shift to AI-First

To establish authority, one must respect the past. Cloud 3.0 did not emerge in a vacuum; it is a direct reaction to the failures of previous models.

- Cloud 1.0 (The Infrastructure Era): This was about “lift and shift.” Companies moved VMs to AWS or Azure to save on data center costs. Agility was low, and architecture was rigid.

- Cloud 2.0 (The Platform Era): Dominated by containers (Docker) and orchestration (Kubernetes). This broke monoliths into microservices. While it offered portability, it introduced massive complexity in networking, storage, and state management. It was still largely centered on the IT administrator.

- Cloud 3.0 (The Intelligent Era): This is where AI becomes a first-class citizen. The architecture assumes that every application will have a machine learning component. It prioritizes Geopatriation (the return of workloads to specific jurisdictions) and Edge-First connectivity over centralized data gravity.

Geopatriation and Sovereign Cloud

For UK and USA enterprises, especially in finance and healthcare, Cloud 3.0 solves the “Paradox of Sovereignty.” In the Cloud 2.0 world, data wanted to be everywhere for redundancy. In Cloud 3.0, data is heavily regulated.

Geopatriation is the strategic move of bringing specific high-value applications back from global public clouds to national or private infrastructure. It is not a rejection of the cloud but a refinement of it. We are seeing the rise of “Sovereign Enclaves”—cloud instances that obey local laws (GDPR, CCPA) without sacrificing the ability to run AI workloads. In Cloud 3.0, you don’t move data to the compute; the compute moves to the data, wherever it legally resides.

The “Invisible Backend” and Serverless 2.0

For developers, Cloud 3.0 is defined by the eradication of the cold start. Early serverless (FaaS) was slow and stateless. Serverless 2.0 leverages WebAssembly (Wasm) to achieve near-native execution speeds with microsecond startup times.

This is the “Invisible Backend.” Traditional observability tools are replaced by intent-driven provisioning. An engineer simply expresses a need: I need a low-latency environment for 5,000 concurrent users in London.”* The Cloud 3.0 backbone autonomously assembles the networking, compute, and security policies to fulfill that intent. You are no longer writing YAML files for deployment; you are defining outcomes.

The Multi-Cloud Reality Check

Vendors have sold “multi-cloud” for a decade, but Cloud 3.0 makes it actually usable. In the past, multi-cloud meant different teams managing different vendors. In Cloud 3.0, abstraction layers smooth out the differences between AWS, Azure, and Google Cloud Platform (GCP).

However, the challenge remains data gravity. Moving petabytes of training data between clouds is cost-prohibitive. Cloud 3.0 architectures solve this through a “Data Fabric,” which provides a unified access layer. The application queries the fabric, and the fabric routes the request to the correct cloud (AWS for training, Azure for compliance) without the developer needing to manage multiple API keys or SDKs.

The Energy and Hardware Nexus

A practical driver of Cloud 3.0 is physics. AI consumes massive power. Cloud 3.0 introduces Energy-Aware Scheduling. If you are running a batch training job, the orchestrator will route that job to a data center in a region where renewable energy is currently abundant and cheap (e.g., a sunny day in Spain or a windy night in Scotland).

Furthermore, the architecture now accounts for Liquid-Cooled Modular Racks and hardware heterogeneity. Cloud 3.0 applications are aware if they are running on a CPU, GPU, or TPU and adjust their processing accordingly. This is not just about speed; it is about the economics of sustainability.

The Developer Experience (DX) Shift

Ask yourself: How much time does your team spend “keeping the lights on” versus building features?

In Cloud 3.0, operations are autonomous. The platform uses AI to predict workload spikes and auto-scale resources before the traffic hits. It also automates “right-sizing,” meaning it continuously analyzes resource usage and downgrades over-provisioned services without human intervention.

A thoughtful question for the reader: Is your current Kubernetes expertise a career asset, or is it becoming a legacy skill as infrastructure moves toward intent-based automation?

Security and Zero Trust

Finally, Cloud 3.0 finally enforces Zero Trust architecture at scale. Because the network is assumed to be hostile and the “perimeter” is dead, Cloud 3.0 platforms bake security into the pipeline. Posture Management is continuous. If a container image has a vulnerability, the Cloud 3.0 platform automatically routes traffic away from it and spins up a patched version without a deployment ticket. Governance is not a roadblock; it is code.

Conclusion

Cloud 3.0 marks a pivotal “Year of Truth” for infrastructure. It signals the end of the “lift and shift” era and the beginning of intelligent distribution. For businesses, the competitive advantage no longer comes from having a cloud strategy but from having an autonomous cloud strategy. The winners will be those who master the complexity of distributed sovereignty and AI orchestration. Look past the hypervisors and look at the data fabric—that is where the next decade of engineering will be won. Review your current architecture for vendor lock-in and begin testing intent-based orchestration tools today.

FAQs

Is Cloud 3.0 just a marketing term from vendors like Salesforce?

No. While the term is used in marketing, the underlying shift toward AI-native, distributed, and sovereign infrastructure is a genuine technical response to the limitations of Kubernetes and centralized public cloud models.

How does Cloud 3.0 solve the “cold start” problem?

Cloud 3.0 utilizes WebAssembly (Wasm) and predictive pre-warming. Unlike traditional containers, WebAssembly modules start in microseconds and maintain persistent execution contexts, making serverless viable for latency-sensitive applications.

Does Cloud 3.0 mean I should leave AWS or Azure?

No. Cloud 3.0 is about abstraction, not replacement. It allows you to use AWS, Azure, and on-premises resources simultaneously. It focuses on moving the workload to the most efficient location (cost, legal, latency) rather than locking you into one vendor.