Introduction

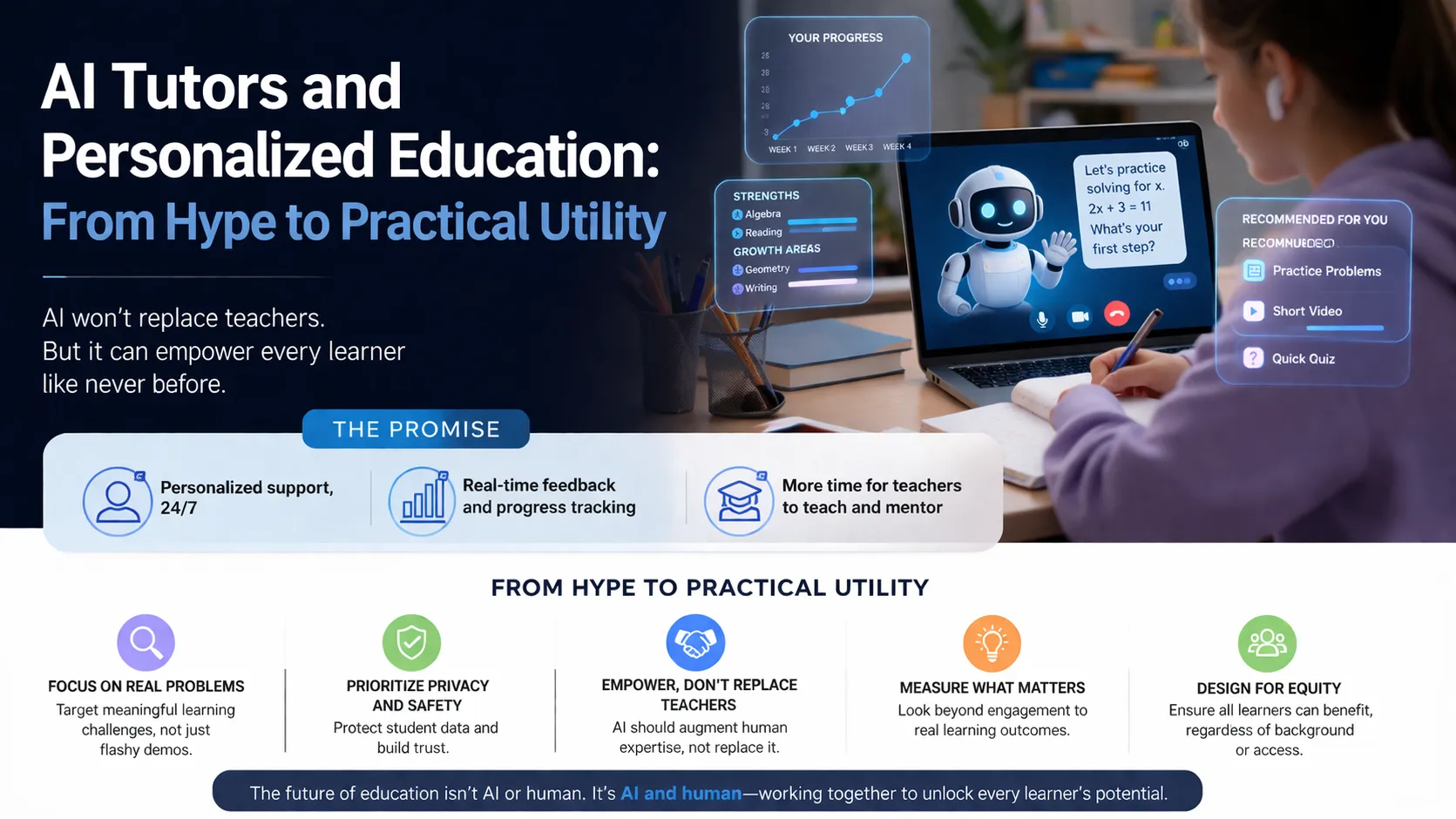

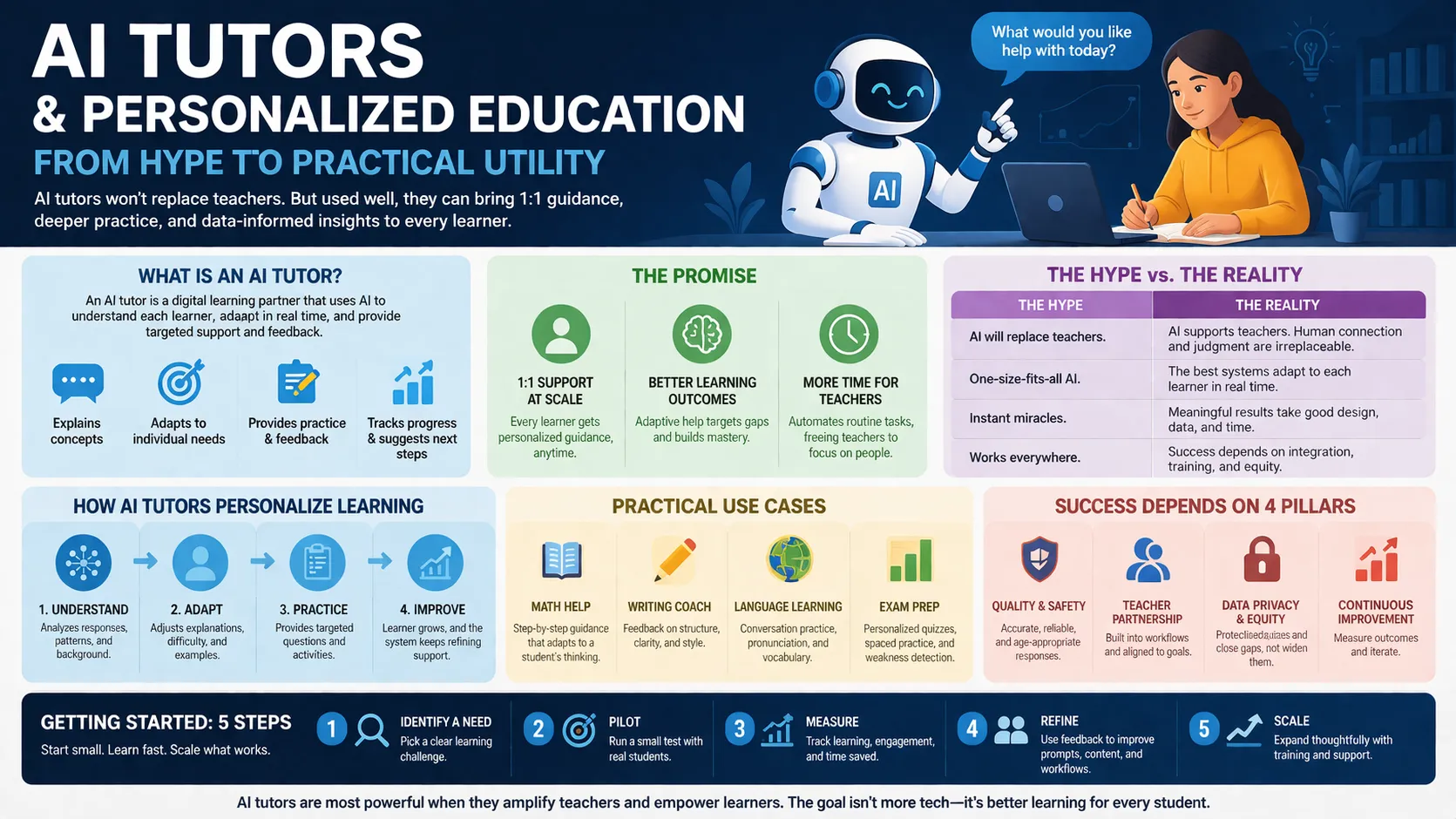

The conversation around AI tutors has shifted. The question is no longer whether generative AI can teach, but how AI tutors should be deployed to support scalable, individualized learning without breaking existing pedagogical frameworks. In the UK and US markets, where educational technology adoption is high but teacher shortages persist, these tools are transitioning from experimental chatbots to governed, curriculum-aligned systems.

This analysis moves beyond the speculative promise of artificial intelligence in classrooms. It focuses on the mechanical reality of these systems: how they are architected, where they fail, and why the “human in the loop” remains non-negotiable. The landscape is defined by retrieval-augmented generation, learner modeling, and strict exam alignment.

The Shift from Rule-Based to Generative Architectures

Legacy intelligent tutoring systems relied on rigid decision trees. They could not parse natural language variations. Modern AI tutors utilize large language models combined with retrieval-augmented generation.

This allows for real-time, context-aware dialogue. Instead of selecting from a pre-written bank of hints, the AI generates specific guidance based on the student’s unique error. However, this requires “constrained instructional components” to prevent hallucinations, a key feature of systems like Smart Tutor AI.

Defining the Core Entity: The Adaptive Tutor

An AI tutor is not a search engine. It is a software agent designed to maintain a student within their zone of proximal development. It accomplishes this through three distinct mechanisms.

First, learner modeling tracks what the student knows. Second, knowledge tracing predicts future performance. Third, adaptive difficulty selection ensures the student is neither bored nor overwhelmed. This creates a closed feedback loop that pure content delivery platforms lack.
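To make the three mechanisms concrete, here is a minimal sketch using Bayesian Knowledge Tracing, a common technique for this kind of loop. It is an illustration only, not a description of how any product named in this article works. The class name, the parameter values, and the difficulty thresholds are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class SkillState:
    """Hypothetical learner-model entry for one skill (all values assumed)."""
    p_known: float = 0.2   # prior probability the skill is already mastered
    p_learn: float = 0.15  # chance of mastering it on each practice attempt
    p_slip: float = 0.1    # chance of a wrong answer despite mastery
    p_guess: float = 0.2   # chance of a right answer without mastery


def update(state: SkillState, correct: bool) -> SkillState:
    """Learner modeling: Bayesian update of mastery after one observed answer."""
    if correct:
        num = state.p_known * (1 - state.p_slip)
        den = num + (1 - state.p_known) * state.p_guess
    else:
        num = state.p_known * state.p_slip
        den = num + (1 - state.p_known) * (1 - state.p_guess)
    posterior = num / den
    # Allow for learning that happens during the practice attempt itself.
    new_p = posterior + (1 - posterior) * state.p_learn
    return SkillState(new_p, state.p_learn, state.p_slip, state.p_guess)


def predict_correct(state: SkillState) -> float:
    """Knowledge tracing: predicted probability the next answer is correct."""
    return state.p_known * (1 - state.p_slip) + (1 - state.p_known) * state.p_guess


def pick_difficulty(state: SkillState) -> str:
    """Adaptive difficulty: keep predicted success in a middle band (assumed cutoffs)."""
    p = predict_correct(state)
    if p < 0.5:
        return "easier"   # likely overwhelmed
    if p > 0.85:
        return "harder"   # likely bored
    return "same"


state = SkillState()
for answer in [True, False, True, True]:
    state = update(state, answer)
    print(round(state.p_known, 3), pick_difficulty(state))
```

Each answer feeds back into the mastery estimate, which in turn drives the next item. That feedback is the closed loop the text describes.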

Evidence of Efficacy: The RCT Data

We now have randomized controlled trials supporting these systems. Recent studies cited by Brookings show that generative AI tutors produce measurable learning gains, specifically in knowledge transfer and student engagement.

Students saved time and demonstrated higher motivation compared to traditional classroom instruction. Yet, this data comes with a caveat. The success is contingent on “pedagogically sound design,” specifically the use of Socratic questioning rather than direct answer provision.

The University Implementation: UT Sage

A practical case study is the University of Texas at Austin’s “UT Sage.” Built on AWS, this platform allows faculty to create custom tutors grounded strictly in their own course material.

The architecture uses Amazon Bedrock and follows a strict “faculty-guided” approach. The AI does not browse the open web. It retrieves information only from approved syllabi and documents, sharply reducing the risk of off-topic or factually incorrect responses regarding course logistics.

Commercial Adoption: Pearson and AI

The private sector is rapidly scaling these tools. Pearson’s “Study Prep” uses syllabus-matching technology to create personalized plans across 25 subjects, blending video with AI chat. Meanwhile, AI serves over 29,000 active users in the UK and US. AI has moved beyond mere test prep into a “comprehensive learning ecosystem.” Its academic AI coach, “Dakota,” is trained on learning science principles, and the platform holds specific certifications for safety regarding users under 13.

The Critical Distinction: Personalization vs. Individualization

The industry often conflates two distinct concepts. Individualization merely changes the pace of pre-structured content. A student moves to the next module after finishing the current one.

True personalization alters the content, goals, and method based on the learner’s interests and preferences. Many products marketed as personalized AI tutors are merely individualized. The difference is crucial for efficacy, as true personalization requires dynamic content generation, not just a linear queue.

Pedagogical Guardrails: Teaching, Not Telling

The most successful implementations restrict the AI’s behavior. In the Harvard and Stanford studies referenced by Brookings, explicit safeguards were embedded in prompts to ensure step-by-step reasoning.

The AI is instructed not to give the final answer but to act as a “teaching agent.” This mimics high-quality human tutoring, where the goal is to guide the student to the “aha” moment rather than simply outputting the solution.
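A guardrail of this kind can be sketched in two parts: a system prompt that sets the teaching role, and a post-check that withholds any reply containing the final answer. The prompt wording, function names, and fallback question below are hypothetical and are not taken from the studies mentioned above. The leak check is deliberately crude.

```python
import re

# Assumed wording; real deployments tune this text carefully.
SOCRATIC_RULES = (
    "You are a teaching agent. Do not state the final answer. "
    "Ask one guiding question at a time and have the student explain each step."
)


def build_messages(problem: str, student_turn: str) -> list[dict]:
    """Assemble a chat-style message list with the guardrail as the system prompt."""
    return [
        {"role": "system", "content": SOCRATIC_RULES},
        {"role": "user", "content": f"Problem: {problem}\nStudent: {student_turn}"},
    ]


def leaks_answer(reply: str, final_answer: str) -> bool:
    """Crude check: does the reply contain the final answer as a standalone token?"""
    pattern = r"(?<![\w.])" + re.escape(final_answer) + r"(?!\w|\.\d)"
    return re.search(pattern, reply) is not None


def guard(reply: str, final_answer: str,
          fallback: str = "What have you tried so far, and where did you get stuck?") -> str:
    """Swap a leaking reply for a neutral guiding question."""
    return fallback if leaks_answer(reply, final_answer) else reply


print(guard("So x = 4.", "4"))
print(guard("What happens if you subtract 3 from both sides?", "4"))
```

The prompt alone is not a guarantee, which is why the sketch also checks the output. A string match like this will miss paraphrased answers, so it should be read as a starting point rather than a complete safeguard.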

The Teacher’s Role in the AI Loop

Is the teacher obsolete? Evidence suggests the opposite. AI tutors currently lack the “socio-emotional” context and accountability mechanisms of a human educator.

Teachers using platforms like Kiwi at NYU Shanghai receive dashboards visualizing student confusion points. The AI handles repetitive low-level scaffolding, freeing the teacher to focus on mentorship and complex project-based learning. The best model is hybrid: AI for skills practice, human for connection.

The Challenge of Learner Readiness

A significant hurdle is the student’s ability to use the tool effectively. An AI tutor requires a degree of metacognition that younger or struggling students may lack.

If a student simply asks the bot for the answer repeatedly, no learning occurs. Educators report that students need training in “how to talk to the chatbot.” Without critical thinking skills, the AI becomes a crutch rather than a scaffold.

Governance and the Digital Divide

While AI tutors promise to democratize access to “elite” private tutoring, they risk widening the achievement gap. Access to devices and reliable broadband remains uneven.

Furthermore, algorithmic bias is a documented risk. If the underlying training data favors dominant cultures or dialects, the tutor may systematically disadvantage minority students. Governance frameworks requiring regular bias audits are essential for equitable deployment.
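One simple form a bias audit can take is comparing an outcome rate across student groups and flagging large gaps. The sketch below assumes a hypothetical log of (group, outcome) pairs, where the outcome might be "the tutor judged a correct answer as correct". The group labels, sample data, and 10-point threshold are illustrative assumptions, not a recognized standard.

```python
from collections import defaultdict


def audit(records, max_gap=0.1):
    """Return per-group success rates, the largest gap, and whether it exceeds max_gap.

    records: iterable of (group_label, outcome_bool) pairs (hypothetical log format).
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        hits[group] += int(ok)
    if not totals:
        return {}, 0.0, False
    rates = {g: hits[g] / totals[g] for g in totals}
    gap = max(rates.values()) - min(rates.values())
    return rates, gap, gap > max_gap


# Made-up sample data for illustration only.
sample = (
    [("dialect_a", True)] * 9 + [("dialect_a", False)] * 1
    + [("dialect_b", True)] * 7 + [("dialect_b", False)] * 3
)
rates, gap, flagged = audit(sample)
print(rates, round(gap, 2), flagged)
```

A flagged gap is a prompt for human review, not proof of bias. Small samples and differences in question difficulty can produce gaps on their own.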

Retrieval and Hallucination Management

For an AI tutor to be trusted, it must be factually accurate. Generic models are prone to hallucination. Therefore, enterprise-grade tutors rely on Retrieval-Augmented Generation (RAG).

The AI first retrieves a specific chunk of verified text (from a textbook or syllabus) and then constructs its answer based solely on that chunk. This grounds the conversation in reality and makes the output verifiable.
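The retrieve-then-answer pattern can be sketched in a few lines. This toy version scores chunks by word overlap. Production systems typically use embedding-based search instead, so treat the scoring, the `min_overlap` threshold, and the prompt wording as assumptions made for the example.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word and number tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, chunks: list[str], min_overlap: int = 2):
    """Return the chunk sharing the most tokens with the question, or None."""
    q = tokenize(question)
    best, best_score = None, 0
    for chunk in chunks:
        score = len(q & tokenize(chunk))
        if score > best_score:
            best, best_score = chunk, score
    return best if best_score >= min_overlap else None


def build_grounded_prompt(question: str, chunks: list[str]):
    """Build a prompt restricted to one verified chunk; None means 'decline to answer'."""
    chunk = retrieve(question, chunks)
    if chunk is None:
        return None
    return (
        "Answer using only the source below. "
        "If the source does not contain the answer, say so.\n"
        f"Source: {chunk}\nQuestion: {question}"
    )


# Made-up course material for illustration.
course_chunks = [
    "Photosynthesis converts light energy into chemical energy in chloroplasts.",
    "The final exam is on 12 May and covers units 1 to 6.",
]
print(build_grounded_prompt("When is the final exam?", course_chunks))
print(build_grounded_prompt("Who won the world cup?", course_chunks))
```

Returning `None` when nothing relevant is found matters as much as the retrieval itself: the tutor declines rather than falling back on the model's general knowledge.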

The Future of Assessment

AI tutors are forcing a re-evaluation of homework. If an AI can complete the assignment instantly, the assignment loses its value as an assessment tool.

Google’s recent education paper suggests a shift away from “AI-proof” assignments toward in-class debates, oral examinations, and portfolio projects. The role of the AI tutor shifts from “homework helper” to “in-class lab partner.”

Where do you currently see the biggest friction point in your institution: student over-reliance on AI or teacher reluctance to adopt the tools?

Economic Realities: Cost Savings vs. Quality

Institutions are adopting AI tutors not just for pedagogy but for budget relief. Reports cite cost savings of 60-70% compared to traditional tutoring programs.

However, the goal should not be replacement but augmentation. The most cost-effective models use AI to handle high-volume, low-complexity tutoring (e.g., vocabulary drills), reserving expensive human tutors for complex problem-solving and emotional support.
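The augmentation model amounts to a routing rule: simple, high-volume requests go to the AI, and everything else goes to a person. The sketch below assumes a hypothetical 1-to-5 complexity score and an emotional-support flag. How those would be assigned in practice is outside the scope of this example.

```python
from dataclasses import dataclass


@dataclass
class TutoringRequest:
    """Hypothetical request record; the fields are assumptions for illustration."""
    topic: str
    complexity: int                      # 1 = drill, 5 = open-ended problem
    needs_emotional_support: bool = False


def route(req: TutoringRequest, ai_max_complexity: int = 2) -> str:
    """Send low-complexity work to the AI and reserve humans for the rest."""
    if req.needs_emotional_support or req.complexity > ai_max_complexity:
        return "human"
    return "ai"


queue = [
    TutoringRequest("vocabulary drill", complexity=1),
    TutoringRequest("multi-step proof", complexity=4),
    TutoringRequest("vocabulary drill", complexity=1, needs_emotional_support=True),
]
for req in queue:
    print(req.topic, "->", route(req))
```

The emotional-support check comes first on purpose, so that a simple task from a distressed student still reaches a human.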

Content Alignment and Curriculum Standards

Generic AI fails in formal education because it does not know the exam board’s specific rubric. Effective AI tutors must be curriculum-aligned.

Smart Tutor AI emphasizes this as a core design feature. The system knows not just the subject matter but also the specific learning objectives required for high-stakes testing. This ensures that personalized learning translates into actual grade improvement.

Psychological Safety and the “Patience” Factor

One underrated benefit of AI tutors is the removal of social judgment. Students who fear embarrassment in a classroom will ask clarifying questions to a patient machine.

The AI never tires of repetition. This creates a psychologically safe environment for struggling learners to articulate partial understanding without shame. This “infinite patience” is a distinct advantage over the human teacher who must manage 30 students simultaneously.

Conclusion

AI tutors and personalized education are moving into a phase of pragmatic consolidation. The winners will not be the most advanced models, but the most constrained and reliable ones. The technology works, but only when it is viewed as a governed tool rather than an autonomous agent. For educators and strategists, the task is not to marvel at the AI but to design the boundaries within which it operates. Start by auditing your assessment methods, then layer in the technology to support, not subvert, your pedagogical goals.

FAQs

Can an AI tutor fully replace a human teacher?

No. AI excels at delivering content and basic scaffolding, but it lacks the emotional intelligence, cultural responsiveness, and classroom management skills of a human. The optimal model is a hybrid.

How do AI tutors prevent students from cheating?

High-quality tutors use “Socratic” prompting to block direct answers. They also use retrieval-augmented generation to ensure responses are based on permitted materials, but they cannot block a student from using a separate, unmonitored model.

Are AI tutors safe for children under 13?

It depends on the platform. Some, like AI, have specific certifications (Google certified for under-13 safety). Always verify COPPA compliance and data privacy policies before deployment.