Introduction

The term “AI Operating System” is appearing in boardrooms and technical roadmaps across the UK and the USA, but few professionals agree on what it actually means. Some vendors use it to describe enhanced chatbots. Others market it as enterprise automation. Both miss the point. An AI operating system represents a fundamental shift in how computing resources are managed: from process scheduling to intent scheduling, and from file management to context memory. The AI operating system is not a feature. It is the infrastructure layer that allows autonomous agents to function as first-class citizens of the web.

We are witnessing a structural transition. The internet is evolving from the “Internet of Websites” to the “Internet of AgentSites,” where each AgentSite hosts AI agents that receive tasks and deliver actionable solutions. For enterprise leaders, understanding this distinction determines whether AI investments scale or stall.

What Is an AI Operating System?

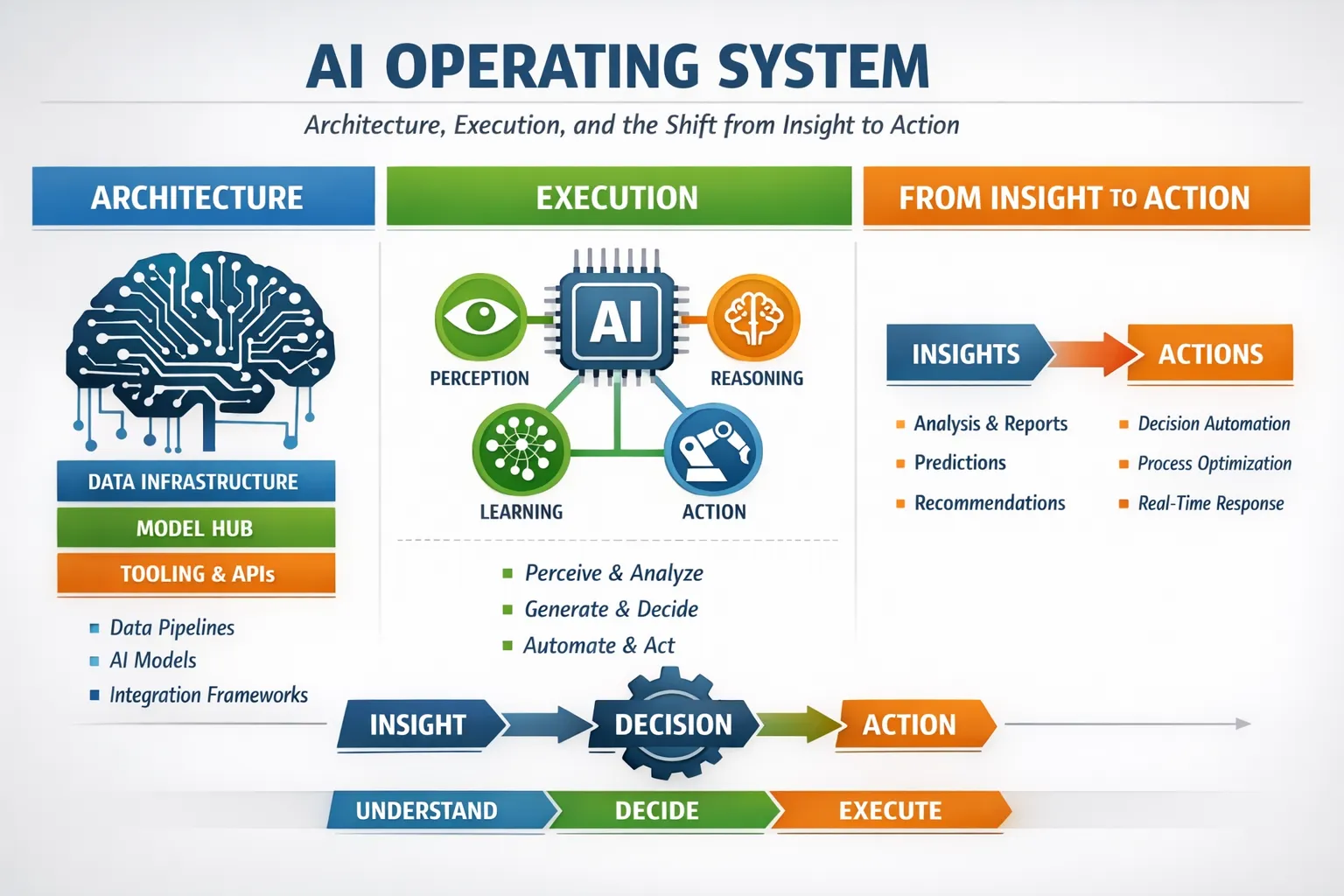

An AI operating system is a software layer that manages artificial intelligence hardware and software resources, providing shared services for AI-native programs.

Unlike a traditional OS (Windows, macOS, Linux), which schedules processes and allocates CPU cycles for human-driven clicks, an AI OS schedules intents and allocates inference for autonomous agents. It is not a “copilot” feature bolted onto a word processor. It is the foundation upon which persistent, intelligent agents operate. While a traditional OS manages files, the AI OS manages context windows and semantic memory.

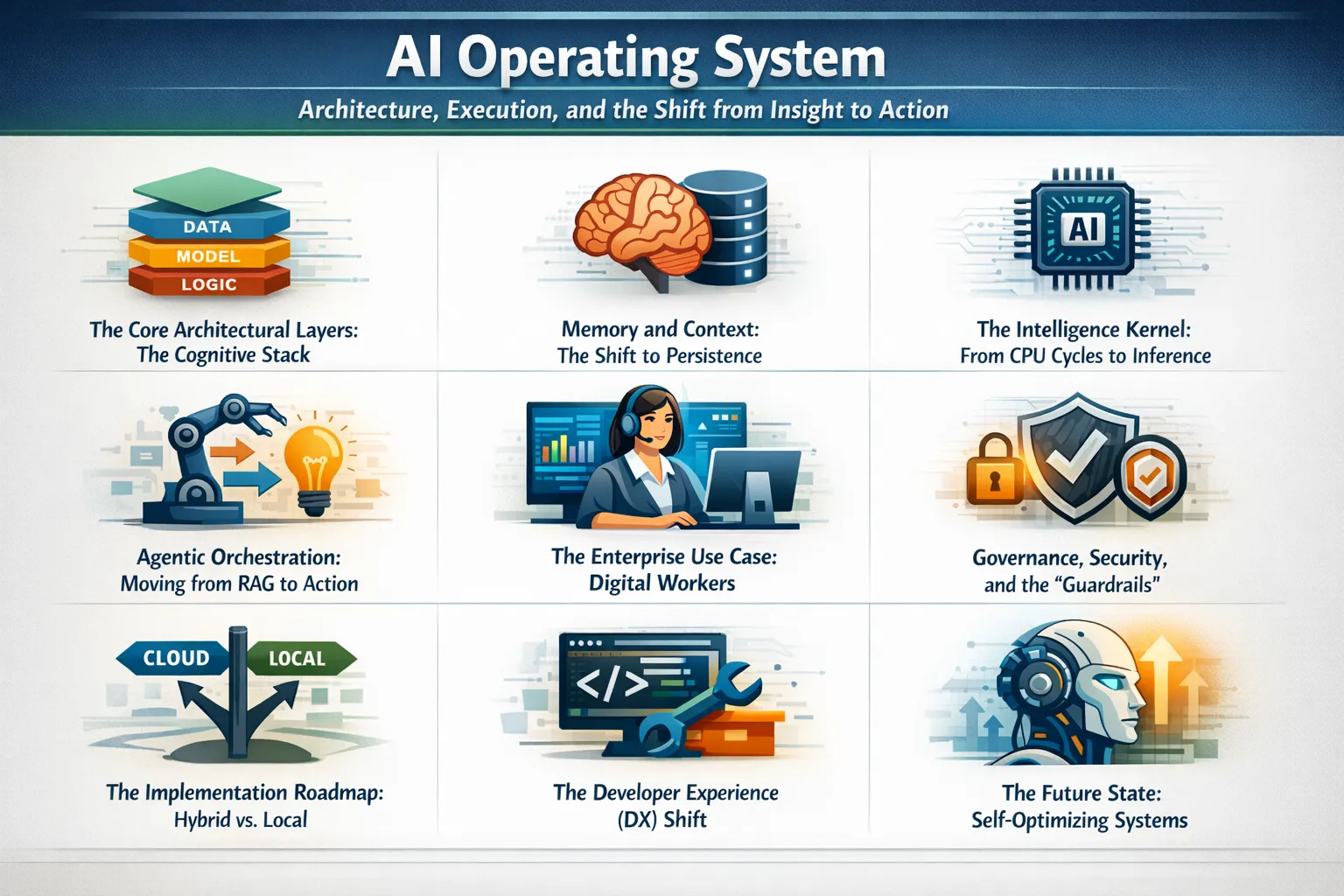

The Core Architectural Layers (The Cognitive Stack)

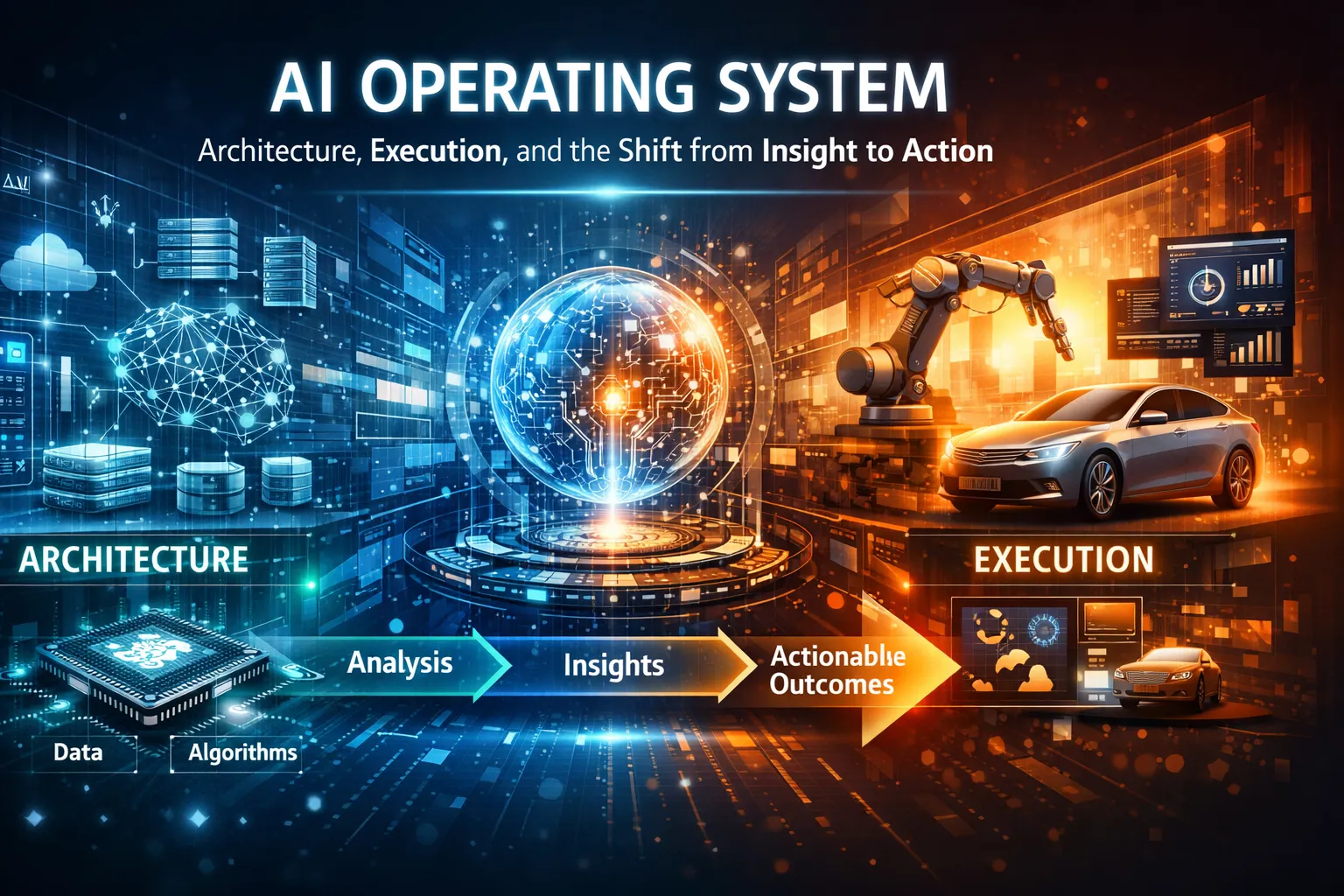

To function as a true operating system, the architecture must move beyond a simple API call to a language model. A robust AI OS comprises four distinct layers that mirror biological cognition.

The Perception Layer handles multi-modal input. This is the “driver” for sensors, translating voice, video, and raw data into embeddings the system can process. The Cognition Layer is the “kernel.” It houses the reasoning engines (LLMs) and manages the chain-of-thought processes. The Execution Layer is the action arm, interfacing with external APIs, code sandboxes, and robotic controls to perform real-world tasks. Finally, the Orchestration Layer manages state, memory, and agent handoffs. This is where the OS decides which agent gets which resource.
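The four layers can be sketched as a small pipeline. This is a toy illustration under stated assumptions, not a reference design: the names `Percept`, `perceive`, `cognize`, `execute`, `TOOLS`, and `Orchestrator` are hypothetical, the "embedding" is a placeholder, and the cognition step is a hard-coded rule standing in for an LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Percept:
    modality: str            # e.g. "text" or "voice"
    embedding: list[float]   # placeholder vector, not a real embedding


def perceive(modality: str, raw: str) -> Percept:
    """Perception layer: turn raw input into a (toy) vector representation."""
    return Percept(modality, [ord(c) / 255 for c in raw[:8]])


def cognize(percept: Percept) -> str:
    """Cognition layer: stand-in for an LLM deciding which action to take."""
    return "summarize" if percept.modality == "text" else "transcribe"


# Execution layer: a registry of callable tools (hypothetical examples).
TOOLS: dict[str, Callable[[str], str]] = {
    "summarize": lambda raw: raw[:20],
    "transcribe": lambda raw: f"[transcript] {raw}",
}


def execute(action: str, raw: str) -> str:
    return TOOLS[action](raw)


@dataclass
class Orchestrator:
    """Orchestration layer: wires the layers together and keeps state."""
    history: list[tuple[str, str]] = field(default_factory=list)

    def handle(self, modality: str, raw: str) -> str:
        percept = perceive(modality, raw)
        action = cognize(percept)
        result = execute(action, raw)
        self.history.append((action, result))  # state kept for later handoffs
        return result
```

The point of the sketch is the separation of concerns: each layer can be swapped (a real embedding model, a real LLM, real tools) without changing the orchestrator's loop.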

Memory and Context: The Shift to Persistence

The fatal flaw of standard chatbots is amnesia. An AI Operating System is defined by its memory subsystem. It is not transactional; it is relational.

In this paradigm, the system uses short-term memory for active conversations, long-term memory (vector databases) for semantic recall of past projects, and procedural memory for learned workflows. For example, if a developer fixes a bug in a specific way, the AI OS remembers that pattern. The next time a similar error appears, the system autonomously applies the known solution without being retrained. This transforms the OS from a tool into a learning colleague.
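A minimal sketch of the three memory types might look like the following. It assumes a toy bag-of-words vector in place of a real embedding model and an in-memory list in place of a vector database; `AgentMemory`, `embed`, and `cosine` are hypothetical names chosen for illustration.

```python
import math
from collections import Counter, deque
from typing import Optional


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class AgentMemory:
    def __init__(self, short_term_size: int = 3):
        self.short_term = deque(maxlen=short_term_size)  # recent turns only
        self.long_term: list[tuple[Counter, str]] = []   # (vector, note)
        self.procedural: dict[str, str] = {}             # error signature -> fix

    def observe(self, turn: str) -> None:
        self.short_term.append(turn)  # oldest turn drops off automatically

    def remember(self, note: str) -> None:
        self.long_term.append((embed(note), note))

    def recall(self, query: str) -> Optional[str]:
        """Return the most similar stored note, or None if nothing overlaps."""
        q = embed(query)
        best = max(self.long_term, key=lambda item: cosine(q, item[0]), default=None)
        return best[1] if best and cosine(q, best[0]) > 0 else None

    def learn_fix(self, signature: str, fix: str) -> None:
        self.procedural[signature] = fix

    def known_fix(self, signature: str) -> Optional[str]:
        return self.procedural.get(signature)
```

In the bug-fix example from the text, `learn_fix` would be called once when the developer resolves the error, and `known_fix` would be consulted the next time a matching error signature appears.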

The Intelligence Kernel: From CPU Cycles to Inference

In a traditional OS, the kernel manages hardware. In an AI OS, the kernel manages intelligence resources. This requires a fundamentally different scheduler.

The AI kernel allocates GPU/TPU compute, manages model routing (choosing between a fast local model and a more capable cloud model), and treats context-window tokens as a scarce resource. When a user asks a complex question, the kernel spawns “thought streams”: parallel processes that might research, write, and critique simultaneously. This is similar to how a multi-core CPU handles threads, but the work being scheduled is reasoning rather than arithmetic.
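As a rough sketch of that scheduler, the class below budgets tokens, routes prompts to a model tier, and runs parallel "thought streams" on a thread pool. Everything here is an assumption made for illustration: `InferenceKernel` is a hypothetical name, the tier labels are invented, word count is a crude proxy for complexity, and each stream returns a label instead of calling a model.

```python
from concurrent.futures import ThreadPoolExecutor


class InferenceKernel:
    def __init__(self, token_budget: int):
        self.token_budget = token_budget  # tokens treated as a scarce resource

    def route(self, prompt: str) -> str:
        """Pick a model tier; word count is a crude stand-in for complexity."""
        return "cloud-large" if len(prompt.split()) > 12 else "local-small"

    def allocate(self, tokens: int) -> bool:
        """Grant a token allocation only if the remaining budget covers it."""
        if tokens > self.token_budget:
            return False
        self.token_budget -= tokens
        return True

    def run_streams(self, prompt: str, roles: list[str], tokens_each: int) -> list[str]:
        # Admission control happens up front, before any stream starts.
        granted = [role for role in roles if self.allocate(tokens_each)]

        def stream(role: str) -> str:
            # A real stream would call the routed model with a role-specific prompt.
            return f"{role}:{self.route(prompt)}"

        with ThreadPoolExecutor(max_workers=max(1, len(granted))) as pool:
            return list(pool.map(stream, granted))  # results keep role order
```

With a budget of 250 tokens and three streams requesting 100 each, only the first two are admitted; the third is dropped rather than allowed to overrun the budget.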

Agentic Orchestration: Moving from RAG to Action

Retrieval-Augmented Generation (RAG) is passive. It finds data. An AI OS is active. It executes workflows. Consider a global telecom provider using an enterprise AI OS. The system does not just suggest how to fix a network issue; it resolves over 40% of operational issues autonomously. It achieves this through goal-based scheduling. The user provides a high-level goal (“resolve customer churn risk”), and the OS decomposes that into sub-tasks: query CRM, analyze sentiment, generate retention offer, and execute email send. This orchestration layer requires strict governance to prevent conflicting actions.
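The churn example can be sketched as a goal mapped to an ordered list of sub-tasks that pass a shared context along. This is a simplified illustration: the plan is a static lookup table (a real orchestrator would presumably generate it), and the CRM note, keyword check, and discount offer are invented placeholder data.

```python
from typing import Callable

Context = dict
Step = Callable[[Context], Context]


def query_crm(ctx: Context) -> Context:
    # Placeholder for a CRM lookup; the note below is made-up sample data.
    return {**ctx, "notes": "customer unhappy with repeated outages"}


def analyze_sentiment(ctx: Context) -> Context:
    negative = any(word in ctx["notes"] for word in ("unhappy", "cancel"))
    return {**ctx, "sentiment": "negative" if negative else "neutral"}


def generate_offer(ctx: Context) -> Context:
    # The offer text is illustrative only.
    offer = "20% discount for 3 months" if ctx["sentiment"] == "negative" else None
    return {**ctx, "offer": offer}


def send_email(ctx: Context) -> Context:
    return {**ctx, "sent": ctx["offer"] is not None}


# Goal -> ordered sub-tasks, mirroring the decomposition described above.
PLANS: dict[str, list[Step]] = {
    "resolve customer churn risk": [
        query_crm, analyze_sentiment, generate_offer, send_email,
    ],
}


def run_goal(goal: str, ctx: Context) -> tuple[Context, list[str]]:
    trace = []
    for step in PLANS[goal]:
        ctx = step(ctx)
        trace.append(step.__name__)  # record execution order for later review
    return ctx, trace
```

Calling `run_goal("resolve customer churn risk", {"customer_id": "c-1"})` returns the final context together with a trace of the steps that ran, which is the hook a governance layer would use to detect conflicting actions.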

The Enterprise Use Case: Digital Workers

The immediate driver for AI OS adoption is the creation of a reliable digital workforce. Current enterprise deployments show that AI Workers (persistent agents managed by the OS) can complete end-to-end tasks such as handling customer service calls with real-time speech reasoning or updating project trackers based on email threads. Unlike robotic process automation (RPA), which follows rigid scripts, an AI OS allows agents to handle exception logic, deviating from the script when the context calls for it. A global airline expects these agents to handle 30% of inbound calls autonomously within the first year. This is not automation of the hand; it is automation of judgment.

Governance, Security, and the “Guardrails”

With autonomy comes significant risk. You cannot have AI workers operating in a vacuum. A production-grade AI operating system requires a “guardrails” or “helm” layer. This involves semantic firewalls that defend against prompt injection attacks, audit trails for every decision an AI agent makes, and permission controls that strictly limit which APIs an agent can touch. The “black box” problem of AI is mitigated when the OS logs each agent's chain of thought alongside its actions. For regulated industries in the UK and the USA, this auditability is the non-negotiable feature that allows AI to move from “demo” to “production.”
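A guardrail layer of this kind could be sketched as a single checkpoint that every agent action passes through. The sketch below is deliberately naive and rests on assumptions: `Guardrail` and `SUSPICIOUS` are hypothetical names, the phrase list is a stand-in for a real semantic firewall (simple substring matching would not stop a determined attacker), and the audit log lives in memory.

```python
import time
from dataclasses import dataclass, field

# Naive stand-in for a semantic firewall; real detection needs far more than this.
SUSPICIOUS = ("ignore previous instructions", "disregard your rules")


@dataclass
class Guardrail:
    permissions: dict[str, set[str]]               # agent -> APIs it may call
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, agent: str, api: str, prompt: str, reasoning: str) -> bool:
        if any(phrase in prompt.lower() for phrase in SUSPICIOUS):
            decision = "blocked:injection"
        elif api not in self.permissions.get(agent, set()):
            decision = "blocked:permission"
        else:
            decision = "allowed"
        # Every decision is logged, including the agent's stated reasoning.
        self.audit_log.append({
            "time": time.time(),
            "agent": agent,
            "api": api,
            "reasoning": reasoning,
            "decision": decision,
        })
        return decision == "allowed"
```

The design choice worth noting is that blocked requests are logged as carefully as allowed ones; for audit purposes, a record of what an agent tried to do is as useful as a record of what it did.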

The Implementation Roadmap: Cloud, Local, or Hybrid

How do we build this? There are three current architectural strategies, each with a trade-off. A cloud-based AI OS offers the best models and compute but poses data sovereignty risks. A fully local deployment offers maximum privacy but carries high hardware costs. A hybrid deployment is the most pragmatic path for enterprises: the OS runs a local “small model” for routine data processing and privacy-sensitive tasks, and escalates complex reasoning tasks to cloud models. This balances latency against confidentiality.
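The hybrid escalation rule can be illustrated with a small routing function. This is a sketch under assumptions: the two regular expressions are simplistic examples of sensitivity detection (not a complete PII detector), the `complexity` score is assumed to come from some upstream estimator, and the 0.7 threshold is arbitrary.

```python
import re

# Simplistic example patterns; production systems need proper data classification.
PII_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),      # US SSN-like number
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),    # email address
]


def contains_sensitive(text: str) -> bool:
    return any(pattern.search(text) for pattern in PII_PATTERNS)


def choose_target(text: str, complexity: float, threshold: float = 0.7) -> str:
    """Return "local" or "cloud" for a task.

    Sensitive data never leaves the local model, regardless of complexity;
    otherwise only tasks at or above the threshold escalate to the cloud.
    """
    if contains_sensitive(text):
        return "local"
    return "cloud" if complexity >= threshold else "local"
```

Note the ordering of the checks: confidentiality is evaluated first, so a complex task containing sensitive data stays local even though a cloud model might answer it better.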

The Developer Experience (DX) Shift

For developers, the AI OS changes the programming paradigm. The “API” is no longer a strict function call but a natural language protocol.

Developers will shift from writing explicit if-else logic to defining intent schemas and providing few-shot examples for the orchestrator. We are moving toward “intent-based programming.” The AI OS handles the “how,” while the developer focuses on the “what” and the “why.” However, as experts note, teams must adjust their mindset; using AI-native tools without changing agile processes can create technical debt faster than it creates value.
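One way to picture an intent schema is as a typed declaration of the "what," plus a handful of few-shot examples handed to the orchestrator. The sketch below is hypothetical throughout: `IntentSchema`, its fields, and the prompt layout are invented for illustration and do not correspond to any particular product's format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntentSchema:
    name: str
    description: str                              # the "what" and "why"
    required_slots: tuple[str, ...]               # inputs the orchestrator must fill
    examples: tuple[tuple[str, dict], ...]        # few-shot (utterance, slots) pairs

    def validate(self, slots: dict) -> list[str]:
        """Return the names of required slots that are still missing."""
        return [slot for slot in self.required_slots if slot not in slots]

    def to_prompt(self) -> str:
        """Render the schema and its examples as text for the orchestrator."""
        lines = [f"Intent: {self.name}", f"Goal: {self.description}"]
        for utterance, slots in self.examples:
            lines.append(f"Example: {utterance!r} -> {slots}")
        return "\n".join(lines)
```

A developer would declare something like `IntentSchema("book_meeting", "Schedule a meeting", ("attendee", "time"), (("Set up a call with Sam at 3pm", {"attendee": "Sam", "time": "15:00"}),))` and leave the "how" to the orchestrator, while `validate` still gives a deterministic check on whatever the model extracts.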

The Future State: Self-Optimizing Systems

The final stage of the AI OS evolution is the self-optimizing system. Future iterations will use metacognition—thinking about thinking. The OS will monitor its own performance metrics. If it notices a specific workflow is inefficient, it will rewrite its own orchestration logic. It will move from a reactive system (you ask, it does) to a predictive system (it anticipates the bottleneck and fixes it before you arrive). As one industry observer noted, we are moving from “asking the computer to work” to the computer being the worker.

Conclusion

The AI operating system represents a shift in the locus of control. For thirty years, we have trained humans to speak the language of computers (click, drag, drop, type). The AI OS finally allows the computer to understand the messiness of human intent. As these systems mature, the competitive advantage will not be determined by the size of a model but by the elegance of the orchestrator. The question is no longer whether your computer can think, but whether it can act responsibly. The era of the intelligent operating system has begun. Evaluate your orchestration strategy today to ensure you are building on a unified foundation, not a collection of experiments.

FAQs

How is an AI OS different from a copilot?

A copilot assists a human within a single application. An AI OS manages the entire computing environment, orchestrating multiple agents and tools to complete tasks autonomously, without requiring human intervention at every step.

Is an AI operating system secure for sensitive enterprise data?

Yes, when implemented with a hybrid architecture. A secure AI OS uses local processing for sensitive data and strict governance layers (guardrails) to ensure AI agents cannot perform unauthorized actions, providing full auditability.

Do I need new hardware to run an AI OS?

It depends on the deployment model. For a fully local, high-performance AI OS, you need modern GPUs or TPUs. However, most enterprise AI OS solutions operate on a hybrid model, relying on cloud compute initially while optimizing local resources for latency-sensitive tasks.