Introduction

AI data centers represent the most significant transformation in digital infrastructure since the cloud computing revolution. An AI data center is a purpose-built facility designed specifically to train and run large language models and advanced artificial intelligence workloads, distinct from conventional server farms built for web applications and enterprise IT. Understanding AI data centers matters now because they have become the primary constraint on AI innovation itself. The difference between a traditional cloud data center and an AI data center is the difference between a suburban road network and a high-speed rail system: both move things, but at radically different scales and efficiencies.

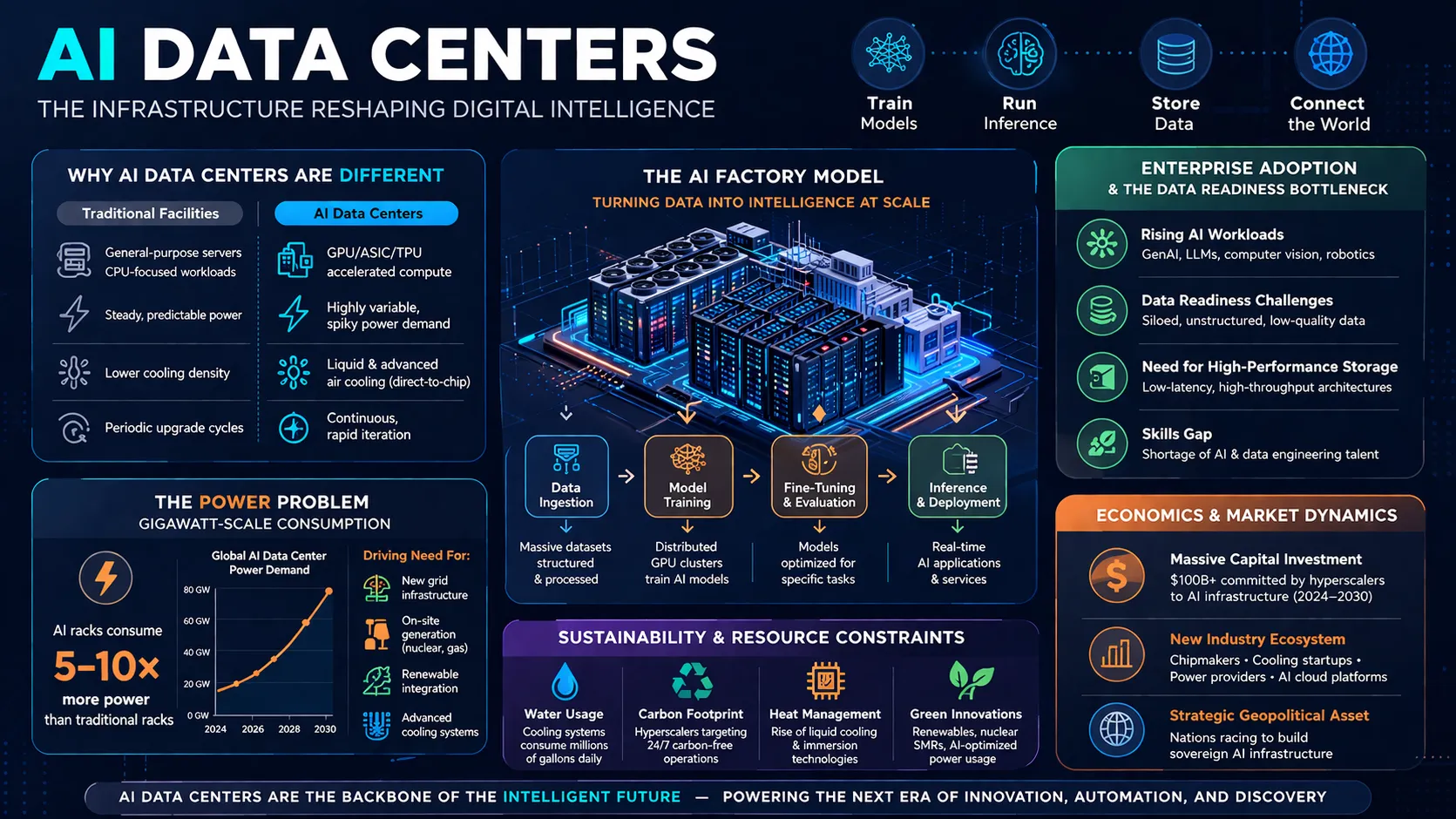

Why AI Data Centers Are Different From Traditional Facilities

Power Density as the Defining Metric

Traditional enterprise data centers operate at roughly 8-17 kilowatts (kW) per rack. AI data centers routinely exceed 120 kW per rack, with some configurations reaching 140 kW. A traditional rack powers approximately 30 servers. An AI rack is one dense building block of a tightly coupled supercomputing cluster in which thousands of GPUs work on the same problem simultaneously.
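To make the gap concrete, here is a minimal sketch that uses only the rack figures quoted above. The function and constant names are illustrative, not taken from any standard.

```python
# Rack power figures quoted in the text (kW per rack).
TRADITIONAL_KW_RANGE = (8.0, 17.0)
AI_RACK_KW = 120.0


def density_multiple(ai_kw: float, traditional_kw: float) -> float:
    """Return how many times more power an AI rack draws than a traditional rack."""
    return ai_kw / traditional_kw


low = density_multiple(AI_RACK_KW, TRADITIONAL_KW_RANGE[1])   # versus a 17 kW rack
high = density_multiple(AI_RACK_KW, TRADITIONAL_KW_RANGE[0])  # versus an 8 kW rack
print(f"An AI rack draws roughly {low:.0f}x to {high:.0f}x the power of a traditional rack")
```

By these figures, one AI rack draws about as much power as 7 to 15 traditional racks.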

Liquid Cooling Is No Longer Optional

Air cooling becomes physically impossible above approximately 40 kW per rack. AI data centers therefore require direct-to-chip liquid cooling or full immersion systems. Microsoft’s Fairwater design uses a closed-loop liquid cooling system with an initial water fill designed to last over six years, replacing water only when chemistry requires it. Some operators are exploring seawater cooling for coastal facilities.

The GPU Is the New Unit of Scale

Where conventional data centers count servers, AI data centers count GPUs. A single AI facility now houses hundreds of thousands of specialized chips connected as one giant supercomputer. Microsoft’s Fairwater sites integrate hundreds of thousands of NVIDIA Blackwell GPUs into coherent clusters, with each rack hosting up to 72 GPUs linked through NVLink fabric.

The Power Problem: Gigawatt-Scale Consumption

Historic Scale of Demand

The largest AI data centers being built today consume more than a gigawatt of electricity, enough to power midsized cities. OpenAI’s Stargate Abilene data center requires enough electricity to serve the population of Seattle and occupies land larger than 450 soccer fields. Industry projections indicate US AI data centers will collectively need 20 to 30 gigawatts of power by late 2027, representing about 5% of current US power generation capacity.
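As a rough sanity check on these numbers, the sketch below combines the 120 kW rack figure and the 72-GPU rack quoted earlier with a round 1 GW facility. It assumes, unrealistically, that all facility power reaches the racks, so the result is an upper bound. The `it_fraction` parameter is a hypothetical knob for cooling and other overhead.

```python
GPUS_PER_RACK = 72        # per-rack GPU count quoted earlier in the article
RACK_POWER_KW = 120.0     # AI rack power quoted earlier in the article
FACILITY_POWER_GW = 1.0   # "more than a gigawatt"; 1 GW used as a round figure


def max_racks(facility_gw: float, rack_kw: float, it_fraction: float = 1.0) -> int:
    """Upper bound on racks if `it_fraction` of facility power reaches the racks."""
    facility_kw = facility_gw * 1_000_000  # 1 GW = 1,000,000 kW
    return int(facility_kw * it_fraction // rack_kw)


racks = max_racks(FACILITY_POWER_GW, RACK_POWER_KW)
gpus = racks * GPUS_PER_RACK
print(f"{racks:,} racks, about {gpus:,} GPUs")
```

Under these assumptions, a 1 GW site supports at most about 8,300 racks and roughly 600,000 GPUs, which is consistent with the "hundreds of thousands" of chips described above.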

Grid Connection as Primary Constraint

In many regions, securing new high-capacity grid connections takes years. This bottleneck has fundamentally shifted where AI data centers get built. Developers are abandoning traditional hubs for locations with available transmission capacity, particularly Texas, which offers abundant natural gas and less regulatory friction. By 2028, data centers are projected to account for between 6.7% and 12% of total US electricity consumption, according to Lawrence Berkeley National Laboratory.

On-Site Generation and Nuclear Comeback

Some AI operators are bypassing grid delays entirely by bringing power generation to the campus. Behind-the-meter natural gas plants, solar arrays, and even nuclear facilities are being deployed specifically for AI workloads. Unit 1 at Three Mile Island, the undamaged neighbor of the reactor that suffered a partial meltdown in 1979, is planned to restart in 2028 to supply power for Microsoft’s data centers.

The AI Factory Model

From Data Center to Intelligence Factory

Industry analysts now describe AI facilities as “AI factories” rather than traditional data centers. An AI factory is a purpose-built system for AI production whose job is to transform raw data into versatile AI outputs, including text, images, code, and video, at industrial scale. This represents a fundamental stack flip from general-purpose CPU-centric systems to GPU-centric accelerated compute optimized for parallel operations.

The Three Dimensions of AI Networking

AI networking distinguishes between three distinct layers:

- Scale-up (within a chassis or rack): Acceleration inside a single scale-up domain, using technologies like NVLink/NVSwitch for ultra-low-latency communication among GPUs in the same rack

- Scale-out (within a factory): Interconnects dozens to hundreds of thousands of GPU nodes within a single data center using Ethernet or InfiniBand

- Scale-across (between factories): Binds multiple AI sites across regions into a unified fabric for federated learning and cross-region inference
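The three tiers can be sketched as a simple classification of where two communicating GPUs sit. This is an illustrative model only: the `GpuLocation` fields and the `network_tier` function are hypothetical names, and the sketch assumes the scale-up domain is a single rack.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SCALE_UP = "scale-up"
    SCALE_OUT = "scale-out"
    SCALE_ACROSS = "scale-across"


@dataclass(frozen=True)
class GpuLocation:
    site: str  # hypothetical site (factory) identifier
    rack: str  # hypothetical rack identifier within the site


def network_tier(a: GpuLocation, b: GpuLocation) -> Tier:
    """Classify which networking tier traffic between two GPUs would cross."""
    if a.site != b.site:
        return Tier.SCALE_ACROSS  # different factories
    if a.rack != b.rack:
        return Tier.SCALE_OUT     # same factory, different racks
    return Tier.SCALE_UP          # same rack (assumed scale-up domain)


print(network_tier(GpuLocation("site-a", "r1"), GpuLocation("site-a", "r2")).value)
```

A scheduler could use a classification like this to keep the most communication-heavy work inside the scale-up tier.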

The Network as Part of Compute

In AI data centers, the network is not merely connectivity but a core component of the compute architecture. Every GPU must share computation results with every other GPU before the model updates. If any connection is slow, the entire job waits. This is why AI data centers pack GPUs densely and minimize physical cable runs.
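The "entire job waits" effect can be illustrated with a deliberately simplified model in which each synchronous step costs the compute time plus the slowest link's transfer time. The timings are hypothetical, and real systems overlap communication with compute, so treat this as a sketch rather than a performance model.

```python
def sync_step_time(compute_s: float, link_times_s: list[float]) -> float:
    """Time for one synchronous step: every GPU waits for the slowest link."""
    return compute_s + max(link_times_s)


healthy = [0.010] * 8             # eight links, 10 ms each (hypothetical)
one_slow = [0.010] * 7 + [0.050]  # same links, one degraded to 50 ms

print(f"healthy:  {sync_step_time(0.100, healthy):.3f} s per step")
print(f"one slow: {sync_step_time(0.100, one_slow):.3f} s per step")
```

In this toy example a single degraded link out of eight stretches every step from 0.110 s to 0.150 s, even though the other seven links are unchanged.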

Enterprise Adoption and the Data Readiness Bottleneck

Data Readiness Trumps Compute Availability

According to industry experts, most stalled AI initiatives are not compute-starved but data-starved. The issue is not lack of GPUs but messy pipelines, slow ETL, and inconsistent governance. Enterprises need to design around a governed, global data pool spanning both on-premise and cloud environments to enable rapid experimentation and fine-tuning.

The Rise of AI-Certified Platforms

Enterprises are standardizing around NVIDIA reference architectures and building deeper partnerships with server and network vendors to guarantee predictable performance as they scale. The Open Compute Project has launched a dedicated AI portal featuring contributions from Meta and NVIDIA, including the Catalina AI Compute Shelf supporting up to 140 kW and the NVIDIA MGX-based GB200-NVL72 platform.

Training versus Inference Infrastructure

Not all AI workloads stress infrastructure equally. Training maximizes accelerator utilization and minimizes communication stalls. Inference must handle variable traffic, deliver stable tail latency, and fail over quickly. This distinction should guide facility design decisions early, as each workload type has different requirements for memory bandwidth, network stability, and fault tolerance.
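Tail latency is usually tracked as a high percentile rather than an average. The sketch below uses a nearest-rank percentile on hypothetical request latencies to show why: a handful of slow requests barely move the mean but dominate the 99th percentile. The `percentile` helper is written here for illustration and is not a library function.

```python
def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, -(-pct * len(ordered) // 100))  # integer ceiling of pct * n / 100
    return ordered[rank - 1]


latencies_ms = [20.0] * 98 + [400.0, 900.0]  # hypothetical inference latencies
mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)
print(f"mean={mean_ms:.1f} ms  p50={p50_ms:.1f} ms  p99={p99_ms:.1f} ms")
```

Here the median stays at 20 ms while the 99th percentile reaches 400 ms, the kind of gap an inference-oriented design has to control.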

Sustainability and Resource Constraints

Water Consumption Realities

Some data centers consume as much as 500,000 gallons of water per day for cooling. However, context matters: US data centers directly used around 17.4 billion gallons of water in 2023, while agriculture used approximately 36.5 trillion gallons, roughly 2,000 times as much. Nevertheless, AI data centers can have significant local effects in water-scarce regions.
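The comparison can be checked directly from the figures in this paragraph. The sketch below also annualizes the 500,000 gallons per day figure, assuming a facility ran at that rate all year, which is an illustrative assumption rather than a reported number.

```python
DATA_CENTER_GALLONS_2023 = 17.4e9  # direct US data center water use, from the text
AGRICULTURE_GALLONS = 36.5e12      # US agricultural water use, from the text
HEAVY_SITE_GALLONS_PER_DAY = 500_000

ratio = AGRICULTURE_GALLONS / DATA_CENTER_GALLONS_2023
heavy_site_gallons_per_year = HEAVY_SITE_GALLONS_PER_DAY * 365  # assumes constant daily use

print(f"Agriculture used about {ratio:,.0f} times as much water as data centers")
print(f"A 500,000 gal/day site would use {heavy_site_gallons_per_year:,} gallons per year")
```

The ratio works out to roughly 2,100, in line with the "roughly 2,000 times" comparison above.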

Beyond PUE: WUE and CUE Matter

The industry is expanding measurement beyond Power Usage Effectiveness (PUE) to include Water Usage Effectiveness (WUE) and Carbon Usage Effectiveness (CUE). The most sustainable design optimizes power, water, and carbon together, not PUE in isolation. Waterless cooling and heat reuse for district systems are becoming standard considerations.
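All three metrics share the same denominator, the energy delivered to IT equipment, which is what makes them easy to report side by side. The sketch below follows the commonly cited Green Grid definitions. The `AnnualFacilityData` type and the sample numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnnualFacilityData:
    total_energy_kwh: float  # all energy entering the facility
    it_energy_kwh: float     # energy delivered to IT equipment
    water_liters: float      # site water consumed
    co2_kg: float            # CO2-equivalent emissions from facility energy use


def pue(d: AnnualFacilityData) -> float:
    """Power Usage Effectiveness: total facility energy per unit of IT energy."""
    return d.total_energy_kwh / d.it_energy_kwh


def wue(d: AnnualFacilityData) -> float:
    """Water Usage Effectiveness: liters of water per kWh of IT energy."""
    return d.water_liters / d.it_energy_kwh


def cue(d: AnnualFacilityData) -> float:
    """Carbon Usage Effectiveness: kg of CO2e per kWh of IT energy."""
    return d.co2_kg / d.it_energy_kwh


# Hypothetical annual figures, for illustration only.
site = AnnualFacilityData(1_200_000.0, 1_000_000.0, 500_000.0, 400_000.0)
print(f"PUE={pue(site):.2f}  WUE={wue(site):.2f} L/kWh  CUE={cue(site):.2f} kgCO2e/kWh")
```

A design change such as evaporative cooling can lower PUE while raising WUE, which is why the three numbers are best read together.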

Critical Mineral Requirements

Data centers require considerable amounts of copper, steel, and aluminum for construction, plus semiconductors, electronics, and fiber optics for equipment. Tariffs on these commodities have dramatically raised construction costs, creating tension between AI infrastructure goals and trade policy.

Economics and Market Dynamics

Investment Scale Rivals Historic Projects

By the end of 2027, AI data centers could collectively see hundreds of billions in investment, rivaling the Apollo program and Manhattan Project. Microsoft spent over $34 billion on capital expenditures in a single recent quarter, much of it on AI data centers and GPUs.

The Depreciation Challenge

Unlike dot-com-era fiber infrastructure that retained value for decades, roughly 80 percent of AI data center project cost is tied up in GPUs with uncertain lifespans measured in years, not decades. This creates financial risk if AI adoption takes longer than expected or if hardware becomes obsolete more quickly than anticipated.
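How sensitive the economics are to GPU lifespan can be seen with simple straight-line depreciation. The 80 percent GPU share comes from the text. The project cost, asset lifetimes, and the straight-line method itself are assumptions chosen for illustration.

```python
def annual_depreciation(
    project_cost: float,
    gpu_share: float,
    gpu_life_years: float,
    other_life_years: float,
) -> float:
    """Straight-line annual depreciation, split into GPU and non-GPU assets."""
    gpu_cost = project_cost * gpu_share
    other_cost = project_cost - gpu_cost
    return gpu_cost / gpu_life_years + other_cost / other_life_years


PROJECT_COST = 10e9  # hypothetical $10 billion project
GPU_SHARE = 0.80     # share of cost tied up in GPUs, from the text

short_life = annual_depreciation(PROJECT_COST, GPU_SHARE, 4, 20)  # GPUs last 4 years
long_life = annual_depreciation(PROJECT_COST, GPU_SHARE, 6, 20)   # GPUs last 6 years
print(f"4-year GPUs: ${short_life / 1e9:.2f}B per year")
print(f"6-year GPUs: ${long_life / 1e9:.2f}B per year")
```

Under these assumptions, shortening GPU life from six years to four raises the annual charge from about $1.43 billion to $2.10 billion.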

Geographic Distribution

The United States leads the world in data center count, followed by Germany, the United Kingdom, China, and France. Virginia has the largest number of data centers in the US, followed by Texas and California. Northern Virginia has emerged as the “Silicon Valley” of data centers due to proximity to federal agencies and availability of land, energy, and water.

Conclusion

AI data centers are rewriting the rules of digital infrastructure. The shift from general-purpose cloud warehouses to specialized AI factories reflects a deeper truth: the physics of computation matters again. Power constraints, cooling limits, and the speed of light in fiber optics are now first-order design considerations, not afterthoughts. The organizations that succeed will not necessarily be those with the most data centers or the largest chip budgets. They will be the ones that treat infrastructure as an integrated system capable of sensing, adapting, and optimizing in real time. The question is not whether to build AI data centers, but whether you can afford to build the wrong ones. Evaluate your power strategy before your compute strategy. Everything else follows.

FAQs

How much power does an AI data center consume compared to a traditional one?

A traditional enterprise rack uses about 8-17 kW. AI racks exceed 120 kW, with large facilities consuming over a gigawatt total, enough to power a midsized city.

What is an AI factory?

An AI factory is a purpose-built system for AI production that transforms raw data into AI outputs such as text, images, and code at industrial scale, representing a fundamental shift from general-purpose CPU-centric infrastructure to GPU-centric accelerated compute.

Why can’t AI data centers use standard air cooling?

Air cooling becomes physically impossible above roughly 40 kW per rack. AI racks at 120 kW or higher require direct-to-chip liquid cooling or full immersion systems to prevent thermal failure.